Securing LLM-Backed Systems: A Guide to CSA’s Authorization Best Practices

How Command Zero secures LLM-backed systems

Introduction

The generative AI gold rush is in full swing, transforming how we build software. Like emerging technologies of the past, most engineering teams are building on shifting sand when it comes to security. While most of the core principles of software/infrastructure security are still applicable to AI, LLMs bring unique challenges to the mix for enterprises.

The good news is, as an industry we’re putting in deliberate effort to prevent history from repeating itself. There are multiple industry consortiums, non-profits and working groups focused on AI security. The Cloud Security Alliance hosts one of the leading technical committees for AI security with the AI Technology and Risk Working Group.

In this post, we dissect the Cloud Security Alliance's Securing LLM Backed Systems guidance and share how Command Zero implements these controls to secure our LLM-backed systems.

Securing LLM Backed Systems: Essential Authorization Practices

Considering the growing adoption of Large Language Models (LLMs), the Cloud Security Alliance (CSA) has released multiple guides to address the growing need for formal guidance on the unique security challenges of integrating large language models (LLMs) into modern systems. Securing LLM Backed Systems was released in August 2024 as part of this effort.

This guide offers strategic recommendations for ensuring robust authorization in LLM-backed systems and serves as a valuable resource for engineers, architects, and security experts aiming to navigate the complexities of integrating LLMs into existing infrastructures.

This blog post is a structured summary of its core recommendations, with introductions explaining the purpose and controls for each section. As this is a very high-level summary, it doesn’t share the detailed implementation guidance found on the document.

Core Principles

The aim of these tenets is to establish core principles that guide secure LLM system design, with an emphasis on separating authorization logic from the AI model. While the original document has five principles, these can be summed up into the following three:

- Control authorization

- Ensure authorization decision-making and enforcement occur external to the LLM to guarantee consistent application of security policies.

- Apply the principles of least privilege and need-to-know access to minimize potential damage from breaches.

- Authorization controls are similar to traditional authorization controls, the only caveat being that they need to cover the systems as potential actors in this context as well.

- Validate all output

- Evaluate LLM output depending on the business use case and the risks involved.

- Input and output validations are similar to traditional input/output sanitization. Where it is different is that output needs to be checked for hallucinations and drift, both of which are expected from non-deterministic systems.

- Remain aware of the evolving threat landscape

- Implement system wide validation for all inputs and outputs. Design with the assumption that novel prompt injection attacks are always a threat.

- This is similar to input and access controls for software systems. The potential impact of threats targeting LLMs is more devastating due to the fluid nature of these systems.

Key System Components

This section aims to highlight essential subsystems within LLM-based architectures, which necessitate specialized security measures to avert unauthorized access and data exposure.

Orchestration Layer

- This component is where actions are coordinated with the LLM including inputs and outputs. This commonly connects the LLM to external services.

- Control: Isolate authorization validation to prevent compromised or untrusted data from influencing system functions.

Vectorized Databases

- These are commonly used alongside LLMs and are effective and managing vector embeddings of data.

- Control: Enforce strict access controls to protect sensitive vectorized data from unauthorized access.

Data Validation

- Implement validations in layers to provide multiple lines of defense.

- Control: Ensure you have deterministic checks in place as a primary safeguard.

Recognizing and Addressing Critical Challenges

The goal of this section is to describe the key security risks that need focused mitigation strategies.

Prompt Manipulation

- Prevent malicious LLM behavior by keeping system data and user prompts in separate contexts.

- Control: Validate and sanitize all outputs and enforce strict input controls.

Risks of Fine-Tuning

- Poisoned training data can lead to unexpected vulnerabilities in LLM systems.

- Control: Perform consistent integrity checks on training data and implement real-time output monitoring.

Architecting Secure LLM Systems

This section is designed to show secure implementations of common LLM architectures.

Retrieval Enhanced Generation (RAG)

- Augment the capabilities of LLMs by combining them with external data retrievals.

- Control: Enforce strict authorization policies with data queries while restricting database access.

System Code Execution by LLMs

- Where systems allow for code to be written and executed by LLMs.

- Control: Implement separate secured environments for code execution to minimize possible impacts.

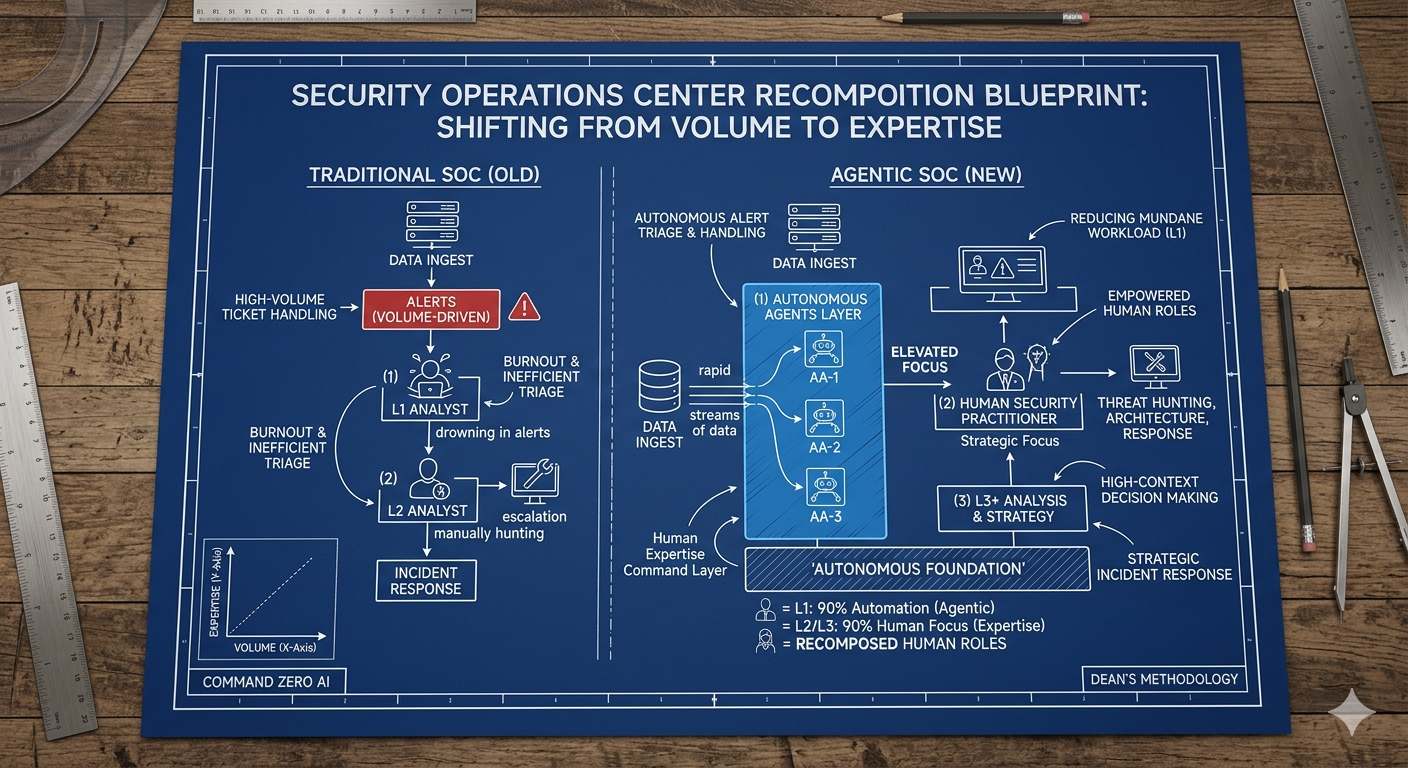

How Command Zero secures LLM-backed systems

Command Zero combines algorithmic techniques and Large Language Models to deliver the best experience for tier-2+ analysis. The platform comes with technical investigation expertise in the form of questions. And the LLM implementation delivers autonomous and AI-assisted investigation flows. In technical terms, LLMs are used for selecting relevant questions and pre-built flows, natural language-based guidance, summarization, reporting and verdict determination.

Our product and engineering teams incorporate AI best practices including the AI Security thought leadership published by Cloud Security Alliance. Specifically, here is how Command Zero adheres to CSA’s AI guidelines:

- To ensure customer privacy, we don't use customer data to train models.

- To ensure explainability, we share context justification for all decisions made by LLMs in the platform.

Controls for input and system interactions

- The platform controls and structures all input provided to models, reducing/removing potential jailbreak and prompt injection attacks.

- Retrieval-Augmented Generation for vector search/retrieval is limited to internal knowledge bases and generated reports.

- Users cannot directly interact with LLMs. Models have no tool calling capability that exposes access to external systems

Controls for securing system output

- The platform enforces structure and content of the model's output, eliminating or minimizing non-deterministic results.

- Manual and automated methods validate and challenge results, further eliminating or reducing hyperbole and hallucinations.

Final Thoughts

As LLMs become part of every piece of software we use every day, securing them becomes more critical than ever. Securing LLM-backed systems requires a comprehensive approach that focuses on controlling authentication, input and output. At the core of this strategy is the careful management of system interactions.

By implementing strict controls and structures for all input provided to models, organizations can significantly reduce the risk of jailbreak and prompt injection attacks. A key component of this approach is limiting Retrieval-Augmented Generation (RAG) to internal knowledge bases and generated reports, ensuring that vector search and retrieval processes remain within controlled boundaries.

Another crucial aspect of securing LLM-backed systems is the elimination of direct user interactions with the models. By removing tool calling capabilities that could potentially expose access to external systems, the platform maintains a tight grip on security. On the output side, enforcing structure and content constraints on the model's responses helps minimize non-deterministic results. This is further enhanced by implementing both manual and automated methods to validate and challenge results, effectively reducing instances of hyperbole and hallucinations.

We appreciate the thought leadership content delivered by the AI Technology and Risk Working Group with the CSA. We highly recommend checking out CSA’s AI Safety Initiative for the document referenced in this post and more.

Subscribe security updates

Run Better Investigations.

At Every Tier.